The Agent-First Thesis

Every piece of software in production today was designed around a single assumption: a human is the user. The onboarding teaches a person the interface. The pricing charges per person. The marketing shows screenshots because a person needs to visualise the product. The documentation walks a person through workflows step by step.

This assumption held for 40 years, but it is starting to break.

The usage data tells a story

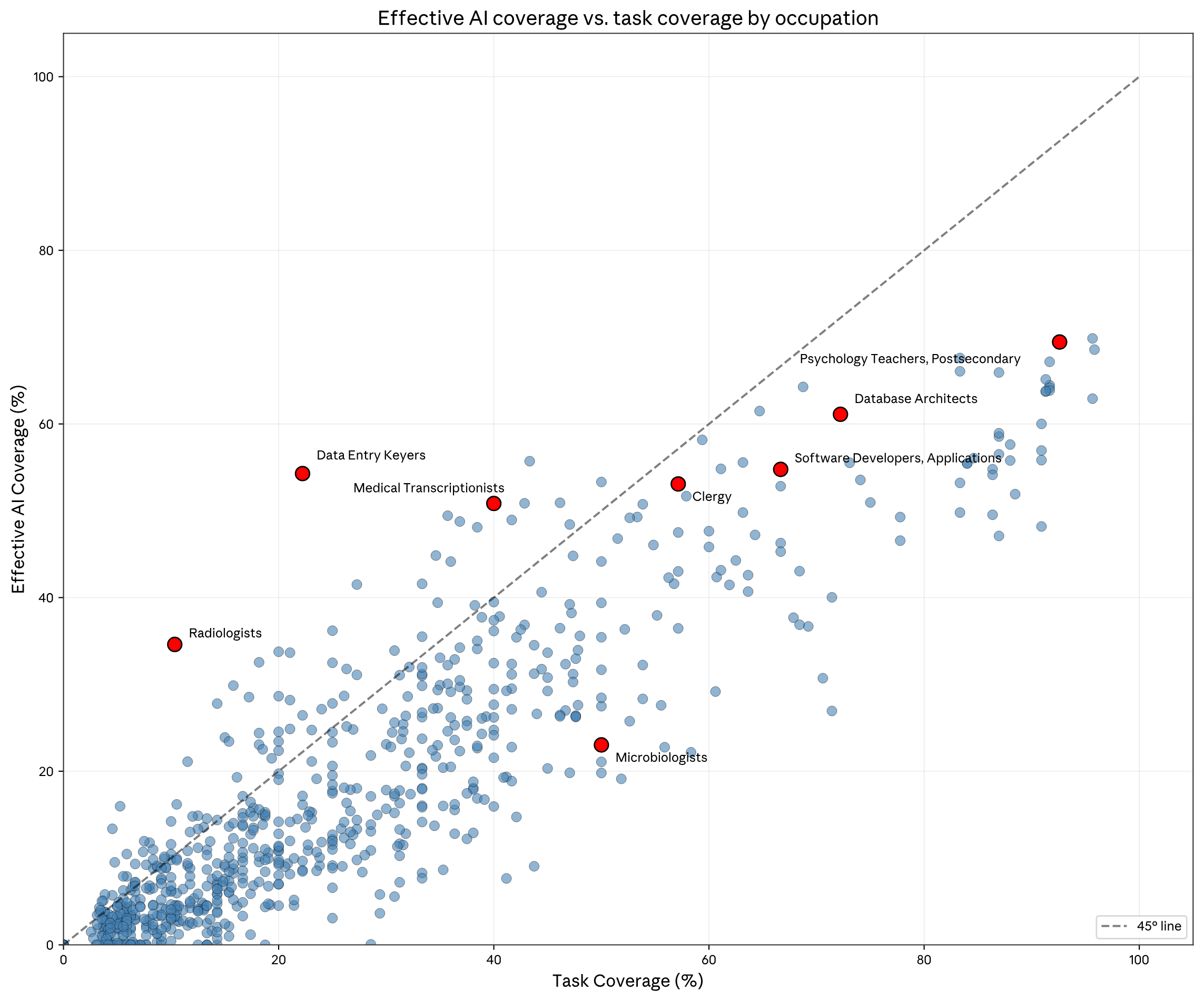

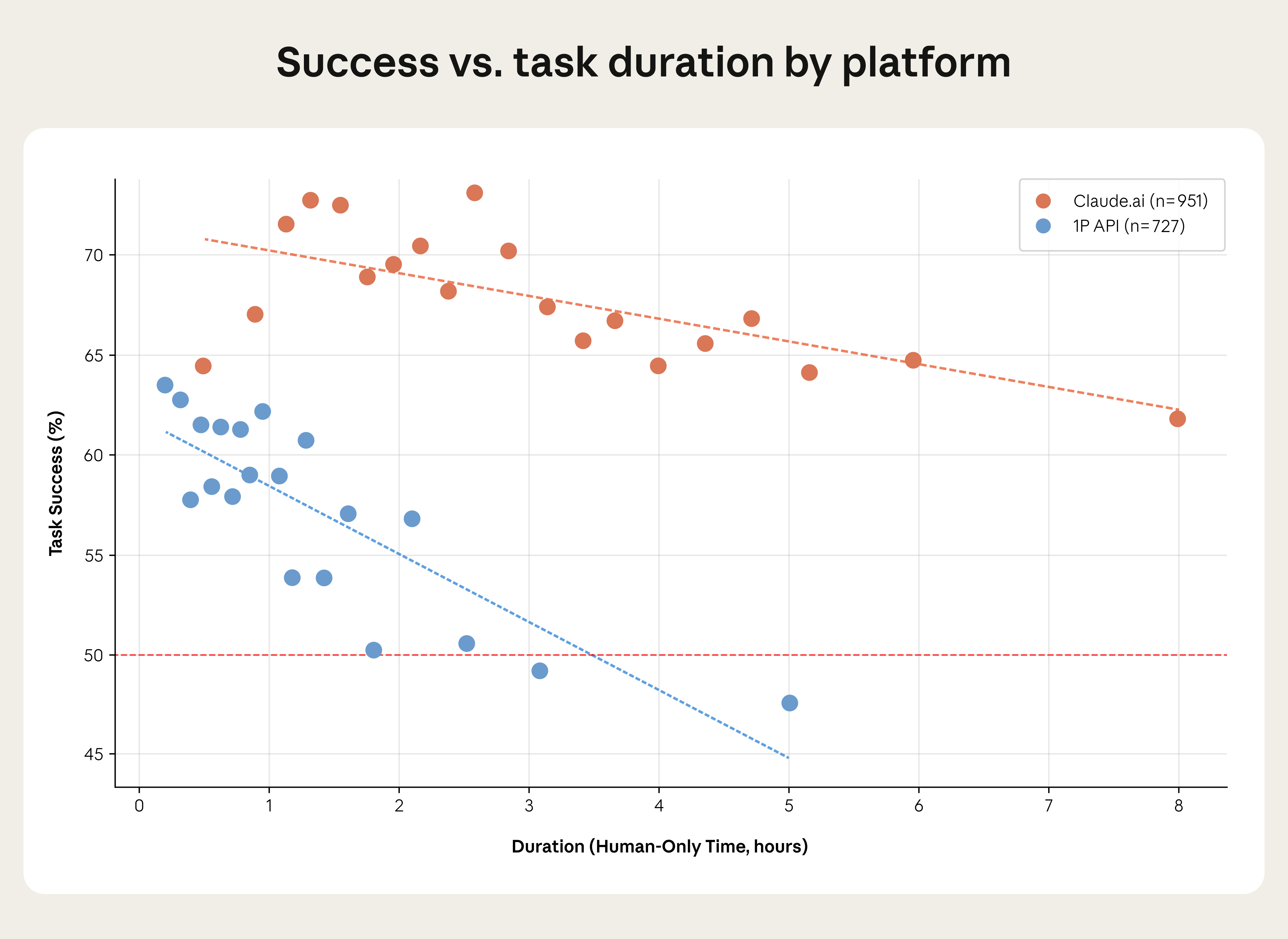

According to the Anthropic Economic Index, based on February 2026 data, 49% of occupations now have at least a quarter of their tasks performed using AI. More importantly, the nature of that usage is shifting. The same data shows automation (the agent does the task) at 45% of all Claude interactions, nearly level with augmentation (the agent helps the human do the task) at 52%. A year earlier, augmentation dominated; the two modes are now converging.

When an agent performs a task on behalf of a human, it becomes the user of whatever tool that task requires. The human sets the goal, but the agent is the one interacting with the software. This distinction matters because the software was designed for the human, and the agent is now working around design decisions that were never made with it in mind.

The full stack of human-centred design

The mismatch goes far deeper than the graphical interface. Modern software design is built around human cognition at every level. Progressive disclosure manages complexity so a person doesn't feel overwhelmed. Onboarding flows exist because humans have learning curves. Confirmation dialogs protect against human mistakes. Session timeouts protect against humans walking away.

For agents, all of this is irrelevant at best and obstructive at worst. A confirmation dialog that interrupts an automated workflow is a bug; an onboarding tooltip is noise; a multi-step wizard that forces sequential navigation through pages is an unnecessary constraint.

But the human-centred assumptions run deeper than the UI. They show up in the data model (structured around how humans think about the domain), the API surface (frequently an afterthought, exposing less capability than the GUI), the documentation (written as prose for human comprehension rather than in structured formats for machine parsing), and the pricing model (charged per human seat). Every layer was designed for a human user, and every layer carries assumptions that don't hold when the user is an agent.

The adapter approach and its limits

The standard response to this mismatch is to build translation layers: API wrappers, MCP integrations, browser automation, and screen-scraping pipelines. Each adapter bridges the gap between what an agent needs (structured input, predictable output, inspectable state) and what existing software provides.

These translations are inherently lossy. The API typically exposes less functionality than the GUI, for example, and a screen scraper captures visual layout rather than semantic meaning. Each adapter introduces its own failure modes and maintenance burden, and when the underlying product changes its interface or API, adapters can break silently.

Bain's 2025 Technology Report identifies "human workflow and user interface dependency" as one of six key indicators of how vulnerable a software category is to agent disruption. The more a product depends on human-centred interaction patterns, the harder it is for agents to operate it effectively, and the more opportunity exists for a tool built differently.

The adapter approach is viable for simple operations. For complex, multi-step workflows where an agent needs to read state, make decisions, take actions, and verify outcomes, the translation overhead compounds with each step.
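One rough way to see the compounding, looking only at reliability: assume, purely for illustration, that each adapter-mediated step succeeds independently with probability p. A workflow of n such steps then succeeds end to end with probability p^n. The values of p and n below are hypothetical, not measurements, and real translation overhead also includes latency and maintenance cost that this simple model ignores.

```latex
P_{\text{workflow}} = \prod_{i=1}^{n} p_i = p^{n} \qquad (\text{assuming independent steps with } p_i = p)

p = 0.99,\; n = 10 \;\Rightarrow\; P_{\text{workflow}} = 0.99^{10} \approx 0.90
p = 0.95,\; n = 10 \;\Rightarrow\; P_{\text{workflow}} = 0.95^{10} \approx 0.60
```

A per-step reliability that looks acceptable for a single call can leave a ten-step workflow failing roughly four times in ten.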

When agents become the customer

The shift from agent as user to agent as customer is the part most people haven't fully considered yet.

Gartner predicts that by 2028, 90% of B2B buying will be intermediated by AI agents, with over $15 trillion of B2B spend flowing through agent exchanges. They describe these as machine customers: nonhuman economic actors that evaluate, select, and transact. By 2030, Gartner estimates machine customers will directly participate in or influence $30 trillion worth of purchases.

This changes the competitive dynamics of software markets. Today, a human evaluates your product through a landing page, a demo, or a free trial, and forms subjective impressions. Brand, visual design, and sales relationships all matter. Tomorrow, an agent will evaluate your product programmatically. It will test whether it can read state, perform operations, and verify outcomes. It will compare your tool against alternatives based on reliability, capability, and how well it accomplishes the task its human asked for. The agent will choose the tool that makes it easiest to do excellent work for its human.

When the buyer is a machine, the product attributes that matter change:

- Machine-readable state over visual polish.

- Predictable interfaces over intuitive onboarding.

- Structured documentation over marketing copy.

- Deterministic behaviour over delightful microinteractions.

IDC predicts that by 2028, 70% of software vendors will have refactored their pricing strategies away from seat-based models. The reason is straightforward: when agents are the primary users, the concept of a seat stops making sense. Pricing will reflect usage, outcomes, or capability instead.

The infrastructure precedent

Infrastructure went through a structurally similar transition over the past 15 years. Before Terraform, server provisioning meant navigating cloud provider dashboards manually. Before Docker, environment setup meant following screenshot-heavy runbooks. Before CI/CD, deployment was a manual process involving file transfers and checklists.

Each of these GUI-dependent workflows was replaced by declarative, text-based, automatable alternatives. The human authoring experience often got worse in the transition (YAML is nobody's favourite format). But the operational properties improved so substantially that the industry adopted them anyway.

The properties that made infrastructure-as-code win are the same properties that agent-first software needs: versionable state, meaningful diffs, repeatable operations, composable primitives, and native automation support. The industry accepted worse human ergonomics because the machine-friendly properties were operationally more valuable. The same trade-off logic applies beyond infrastructure, across every category where agents are becoming the primary operators.
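To make those properties concrete, here is a minimal Python sketch of the desired-state pattern that infrastructure-as-code tools popularised: declare the target state, diff it against the current state, apply only the changes, then verify. It is a toy in-memory model built on our own assumptions; the resource names and the functions `diff_state`, `apply_changes`, and `reconcile` are hypothetical and are not Terraform's or any other tool's API.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical resource model: resource name -> configuration (e.g. an instance size).
State = Dict[str, str]
# A change is (action, resource name, new value); the value is None for deletes.
Change = Tuple[str, str, Optional[str]]


def diff_state(current: State, desired: State) -> List[Change]:
    """Return the changes needed to move `current` to `desired`, in a stable order."""
    changes: List[Change] = []
    for name in sorted(desired.keys() | current.keys()):
        if name not in current:
            changes.append(("create", name, desired[name]))
        elif name not in desired:
            changes.append(("delete", name, None))
        elif current[name] != desired[name]:
            changes.append(("update", name, desired[name]))
    return changes


def apply_changes(current: State, changes: List[Change]) -> State:
    """Apply changes to a copy of the state; the input state is left untouched."""
    new_state = dict(current)
    for action, name, value in changes:
        if action == "delete":
            del new_state[name]
        else:
            # Create and update both set the desired value.
            assert value is not None
            new_state[name] = value
    return new_state


def reconcile(current: State, desired: State) -> State:
    """Read state, diff, apply, then verify that nothing is left to change."""
    changes = diff_state(current, desired)
    result = apply_changes(current, changes)
    # Verification: a correct apply leaves an empty diff behind.
    if diff_state(result, desired):
        raise RuntimeError("reconcile did not converge")
    return result


if __name__ == "__main__":
    current = {"web": "small", "db": "large", "cache": "small"}
    desired = {"web": "medium", "db": "large", "queue": "small"}
    print(diff_state(current, desired))
    # [('delete', 'cache', None), ('create', 'queue', 'small'), ('update', 'web', 'medium')]
    # Re-running is safe: once reconciled, there is nothing left to change.
    print(diff_state(reconcile(current, desired), desired))
    # []
```

Because the state is plain data, the same diff is readable by an agent deciding what to do next and by a human reviewing what it did, which is what makes the operation versionable, repeatable, and verifiable.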

The rebuild question

The common response from existing software companies is to retrofit agent support: add an API, ship an MCP integration, build a chatbot in the sidebar. This approach preserves the existing architecture and customer base, which is why incumbents gravitate toward it.

The structural problem is that the core product's assumptions about the user being human are embedded throughout the stack. Retrofitting agent support onto a human-centred product means the agent always gets a second-class experience, constrained by decisions that were made for a different type of user.

The SaaS categories most vulnerable to disruption share common characteristics: externally observable workflows, standardised processes, and high dependency on human-centred UI. The most resistant categories have deep proprietary data moats and high switching friction. In the large space in between, there is an opportunity for tools purpose-built with agents as a primary user.

2026 has been a breakthrough year for multi-agent systems, where specialised agents collaborate under central coordination. When agents start orchestrating other agents to accomplish complex tasks, the tools they reach for will be the ones built for that context. Software that requires human navigation or visual interpretation to function won't participate in those workflows.

The 18-month question

If you're building software right now, the question worth asking is: who will actually be using this product in 18 months?

If the answer is increasingly an agent acting on behalf of a human, then the design priorities invert. The agent doesn't evaluate your product the way a human does. It evaluates whether it can read state, perform operations reliably, and verify outcomes for the task its human asked it to complete. The human still matters, but the human's role is shifting toward setting goals and reviewing the agent's work rather than operating the tool directly, a shift already well underway in software development, where coding agents are in heavy use.

Building for this future means designing the machine-readable interface first and layering the human experience on top. The tools that get this right will be the ones agents select when given a task. The tools that don't will depend on increasingly complex translation layers to remain accessible in an agent-intermediated world.

We've been running agent orchestration in production at Frequency for several weeks. The patterns we've observed in how agents struggle with existing tools, and what they need from the tools they work with, are shaping what we build next. The transition to agent-first software is already in progress across developer tooling and infrastructure. We believe the rest of the software stack will follow the same path.

The shift to agent-first software has begun. The open question is which categories, and which tools, will define it.